Amazon, YouTube, and Pandora know what you like. They can predict it thanks to artificial intelligence. Furthermore, in the last few years A.I. has made significant advances in voice and facial recognition. As artificial intelligence continues to advance, it is likely to raise some interesting theological questions as it impinges on assumptions Christians may have about God and the soul. In the second part of the post, I will offer some preliminary theological reflection on this. But first, it is important to understand how artificial intelligence actually works.

1. HOW A.I. WORKS

The neural network, which is the foundation of much of modern artificial intelligence, is surprisingly simple and straightforward. There are some excellent resources on YouTube that explain how neural networks work and how you can make your own. My favorite is a series of four videos produced by 3Blue1Brown. The first video is entitled “What Is A Neural Network,” and may be found at youtube.com. After watching these videos, I was able to program a neural network on my desktop computer that does very basic visual recognition. It can identify digits from zero to nine written by human beings. My network, I am happy to say, has an accuracy rate of over 95% on a sample of 10,000 test images that I downloaded from the MNIST (Modified National Institute of Standards and Technology) website: http://yann.lecun.com/exdb/mnist/.

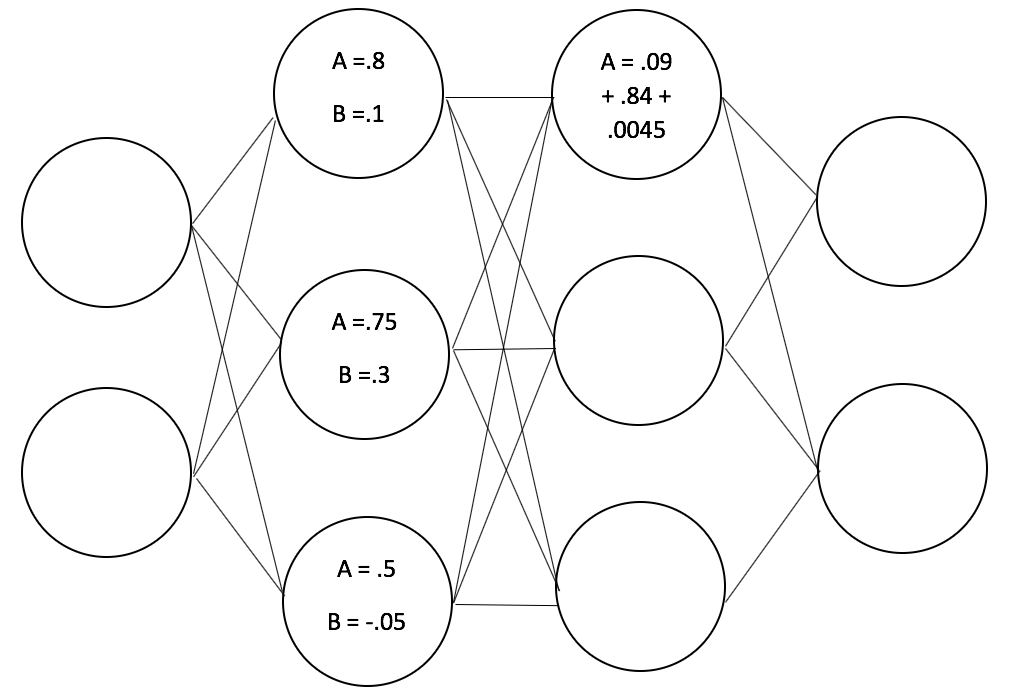

Here is how it works. The basic building block of the network is the “neuron,” which is simply a variable holding a numerical value (often between 0 and 1). This is the neuron’s “activation” value. Each neuron also has a “bias,” which can be changed to give the neuron more or less importance in the network. The network itself has multiple layers. In the case of the simple network in the diagram above, there are 4 layers. The column of neurons on the far left is the input layer. The column of neurons on the far right is the output layer. The two middle columns are the “hidden layers.” Each neuron in one layer is linked with each neuron in the next layer. Each neuron sends its activation value (with its bias added) through the links to every neuron it is connected to in the next layer. Each link carries a “weight,” which multiplies the value that is passed through the link. This is another way to change the relative importance of neurons in the network. Some links can be given more weight than others.

So in the network depicted above, the first neuron sends the value .9 (.8 + .1) through the link to each neuron in the next layer. Here let’s consider only the first neuron in the next layer. If we imagine that the link between these two neurons has a weight of .1, then the receiving neuron gets the value .09 (.9 x .1). The second neuron in the sending layer achieves the value of 1.05 (.75 + .3). If we imagine that the link has a weight of .8, then it sends the value .84 (1.05 x .8) to the receiving neuron. Finally, the third neuron in the sending layer has a value of .45 (.5 + (-.05)). If the link to the first neuron of the next layer has a weight of .01, then it sends the value .0045 (.45 x .01). The activation value of the first neuron in the next layer is then the sum of all the values sent to it, giving it an activation value of .9345 (.09 + .84 + .0045). The values pass in this fashion from the input layer on the left all the way to the output layer on the right. This kind of network is called a “feed forward” network.
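For readers who prefer to see the arithmetic as code, here is a minimal Python sketch of the worked example above. It is only an illustration, not the program described in this post, and the names `sending_neurons` and `sent_value` are made up for the sketch.

```python
# Values from the worked example: (activation, bias, weight of the link to the
# first neuron in the next layer) for each of the three sending neurons.
sending_neurons = [
    (0.8, 0.1, 0.1),
    (0.75, 0.3, 0.8),
    (0.5, -0.05, 0.01),
]

def sent_value(activation, bias, weight):
    """Value that arrives at the receiving neuron: (activation + bias) x weight."""
    return (activation + bias) * weight

contributions = [sent_value(a, b, w) for a, b, w in sending_neurons]

# The receiving neuron's activation is the sum of everything sent to it.
receiving_activation = sum(contributions)

# Rounded to four places to hide floating-point noise.
print([round(c, 4) for c in contributions])  # [0.09, 0.84, 0.0045]
print(round(receiving_activation, 4))        # 0.9345
```

The sketch follows the simplified scheme used in this post. Many presentations instead add the bias at the receiving neuron and then pass the sum through a squashing function such as the sigmoid, so that the activation stays between 0 and 1.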

If we reflect on the size of the numbers here, we can see that the weights and biases described above are calibrated in such a way that the second neuron has the most influence, and the third neuron has the least. Indeed the third neuron’s influence (.0045) is negligible. Since every value passed through its link is going to be multiplied by .01, it is unlikely that it will ever have a significant effect on the first neuron in the next layer. Of course, it may have significant influence on other neurons in that layer if its links to them have greater weight values.
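The same point can be sketched for a whole layer at once. In the Python below, the first row of weights comes from the example above, while the second row is invented purely for illustration: it gives the third sending neuron a heavy link (0.9) into a hypothetical second receiving neuron. The function name `feed_forward_layer` is likewise an assumption of the sketch.

```python
def feed_forward_layer(activations, biases, weights):
    """Pass one layer's values to the next layer.

    weights[j][i] is the weight on the link from sending neuron i
    to receiving neuron j.
    """
    outgoing = [a + b for a, b in zip(activations, biases)]
    return [sum(w * v for w, v in zip(row, outgoing)) for row in weights]

activations = [0.8, 0.75, 0.5]
biases = [0.1, 0.3, -0.05]
weights = [
    [0.1, 0.8, 0.01],  # links into the first receiving neuron (from the example)
    [0.2, 0.1, 0.9],   # hypothetical links into a second receiving neuron
]

next_layer = feed_forward_layer(activations, biases, weights)
print([round(v, 4) for v in next_layer])  # [0.9345, 0.69]
```

With these made-up weights, the third neuron contributes .405 of the second receiving neuron's .69, even though it contributes almost nothing to the first.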

In the network I built, there were also four layers. The input layer represented a digital image that was 28 x 28 pixels. Therefore, that layer had 784 neurons, one for each pixel, containing an activation value from 0 (black) to 1 (white). The two hidden layers each had 16 neurons. The output layer had ten neurons, each neuron representing a digit from zero to nine. The goal is that when a digit written by a human is converted into 784 pixels, and those pixels are fed into the input layer, the neuron representing the correct digit in the output layer will be set to 1 and the rest of the neurons in the output layer will be set to 0 (or values very close to those).
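To give a sense of scale, the following Python sketch counts the links in a network with these layer sizes and builds the desired output for a given digit. The helper name `desired_output` is hypothetical, and the count covers weights only, since how biases are counted depends on the scheme used.

```python
# Layer sizes described above: 784 inputs, two hidden layers of 16, 10 outputs.
layer_sizes = [784, 16, 16, 10]

# Every neuron in one layer links to every neuron in the next, so each pair of
# adjacent layers contributes (number of senders x number of receivers) weights.
weights_per_gap = [a * b for a, b in zip(layer_sizes, layer_sizes[1:])]
total_weights = sum(weights_per_gap)

def desired_output(digit):
    """Target values for the output layer: 1 for the correct digit, 0 elsewhere."""
    return [1.0 if i == digit else 0.0 for i in range(10)]

print(weights_per_gap)    # [12544, 256, 160]
print(total_weights)      # 12960
print(desired_output(3))  # [0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
```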

In order to achieve this, the network needs to be “trained.” This involves adjusting all the weights and biases so that the desired results are attained. Initially, the weights and biases are set randomly. Training occurs by feeding the network thousands of examples in which the correct answer is known. In my case, I used 60,000 training examples from the MNIST website. Each example was a digitized image of a digit written by a human being, paired with the digit the image is supposed to represent. At first the network gets most of the examples wrong, since the weights and biases are set randomly. However, since we know what values we want the output neurons to contain for each example, we can adjust the weights and biases that feed each output neuron so that its value moves a baby step closer to the desired value. Then we perform similar nudges on the previous layer and the layer before that. The process is called “backpropagation.” It requires some calculus and is the most complicated aspect of programming a neural network, but there are some good resources on the web for learning the calculus that is required. It turns out that if you nudge the output values in the direction of the correct answer for each example, back-propagate the results over the previous layers, and repeat the process tens of thousands of times, you end up with a network that has a rather sophisticated ability to recognize visual objects.
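The “nudging” idea can be sketched in a few lines of Python. This is a deliberately tiny stand-in, not the program described in this post: it trains a single neuron rather than a layered network, it uses the common formulation in which the bias is added to the weighted sum and the result is squashed by a sigmoid function, and its training data is a made-up toy task (logical OR) rather than MNIST. The step size, starting values, and all names are assumptions. Full back-propagation applies the same chain-rule calculation to the earlier layers as well.

```python
import math

def sigmoid(z):
    """Squash any number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-z))

# Toy examples (hypothetical): two inputs and the desired output, logical OR.
examples = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0),
            ((1.0, 0.0), 1.0), ((1.0, 1.0), 1.0)]

def predict(weights, bias, inputs):
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)

def total_error(weights, bias):
    """Sum of squared differences between actual and desired outputs."""
    return sum((predict(weights, bias, x) - t) ** 2 for x, t in examples)

def train(weights, bias, step=0.5, passes=2000):
    weights = list(weights)  # copy so the starting values are left untouched
    for _ in range(passes):
        for inputs, target in examples:
            out = predict(weights, bias, inputs)
            # Chain rule: how the squared error changes as the weighted sum changes.
            delta = 2.0 * (out - target) * out * (1.0 - out)
            # Nudge each weight and the bias a small step against that slope.
            for i, x in enumerate(inputs):
                weights[i] -= step * delta * x
            bias -= step * delta
    return weights, bias

start_weights, start_bias = [0.1, -0.2], 0.0
trained_weights, trained_bias = train(start_weights, start_bias)

print(total_error(start_weights, start_bias))      # error before training
print(total_error(trained_weights, trained_bias))  # much smaller after training
```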

Take a look at the video at the end of this blog post to see the oddly shaped numbers that the network can correctly identify.

After the network is trained, it is ready to be tested. Testing involves giving it examples it has never seen before and seeing how accurate it is. I used 10,000 testing examples from the same MNIST website, and my network attained an accuracy rate of over 95% on that sample set.
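Measuring accuracy is simple enough to sketch in Python. The network's answer for an image is taken to be the output neuron with the highest value, and accuracy is the fraction of test images for which that answer matches the known label. The outputs below are fabricated for illustration by a hypothetical helper, `fake_output`; they are not results from the network described here.

```python
def predicted_digit(output_activations):
    """Index of the output neuron with the highest activation."""
    return max(range(len(output_activations)), key=lambda i: output_activations[i])

def accuracy(outputs, labels):
    """Fraction of examples where the predicted digit matches the label."""
    correct = sum(1 for out, label in zip(outputs, labels)
                  if predicted_digit(out) == label)
    return correct / len(labels)

def fake_output(digit):
    """Made-up output layer that strongly favors one digit (illustration only)."""
    return [0.9 if i == digit else 0.05 for i in range(10)]

# Four pretend test images; the network "answers" 7, 2, 1, 4.
outputs = [fake_output(7), fake_output(2), fake_output(1), fake_output(4)]
labels = [7, 2, 1, 9]  # the last answer is wrong

print(accuracy(outputs, labels))  # 0.75
```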

What is the significance of the “hidden layers,” i.e., those neurons that lie between the input and output layers? The intriguing thing is that, for a network like this one, no one really knows. You might think that each layer is responsible for a different visual aspect of the digits. For example, the video produced by 3Blue1Brown asks you to imagine that the first hidden layer identifies edges and the second one identifies component parts of the digits. However, as the video later reveals, this is not the case. No one has so far been able to attach any clear “meaning” to the inner layers of this network. We only know that they are necessary for the network to function correctly. It works, but we are not exactly sure how it works.

2. HOW A.I. DOES – AND DOES NOT – CHALLENGE US

I have two observations in light of all this. The first is that the machines are not likely to take over the world any time soon. When you see how neural networks actually work, at least in their most basic forms, it is clear that they are little more than a fairly complicated calculus problem. As one video put it, they are “more A. than I.” To push this a bit further, the basic ones are simple enough that it is possible for a hobbyist like me to program one.

But that leads to my second observation, which is that as artificial intelligence advances, it may call into question assumptions that Christians may have about God and the soul. If a mere calculus problem can exhibit visual recognition behavior, this raises the question of whether we ourselves are mere calculus problems, albeit much more complicated ones. The better A.I. can replicate human behaviors, the more forcefully this question will present itself.

I don’t think this is a serious challenge yet. Our experiences of thought and emotion are so complex that it seems unimaginable that they could be accounted for or truly replicated by artificial intelligence. Indeed, Christians may be tempted to argue for the existence of the creator on the grounds that mental processes are too complex to be explained by science; they can only be explained by God and the soul. While this argument may make sense now, I think it would be a mistake to put too much weight on it.

That is because it is more an appeal to intuition than an actual argument. The intuition is that it just seems right that an unimaginably complex phenomenon cannot be explained by anything less than an omniscient creator. But what if complex behaviors can in fact arise from simple systems? In that case, the intuition may lose some of its force.

Artificial intelligence in its current state by no means offers a physical account of human mental processes arising from simple systems, but it does make such an account imaginable and therefore it threatens the intuition I have just described. A neural network is a very simple structure that resides in the memory of a computer, yet it is capable of very complex behavior that looks like what we might call “learning.” No longer is artificial intelligence the exclusive domain of high-tech corporations or the N.S.A. Anyone with a basic knowledge of programming and a little understanding of calculus can program a neural network on their home computer.

So let’s imagine that a well-intentioned pastor tries to shore up his congregation’s faith in God as the creator by appealing to the intuition that cognitive processes are so complex that they can only be explained by a soul created by God. Let’s further imagine there are high school students in the congregation who have actually experimented with programming neural networks on their home computers. They realize that simple networks consisting of about 1,000 neurons can accurately achieve basic visual recognition. Are they going to share the pastor’s intuition? If a small network can learn to recognize digits written by humans, who knows what a network with a billion neurons could do?

It would be better for the pastor to realize from the outset that our natural intuition about complexity is a slender reed that may not bear the weight of an argument intended to prop up God. At its core, it is merely one example of the “God of the gaps” argument, which tries to maintain some role for God in an increasingly secular worldview by appealing to God to account for whatever science cannot explain. The problem is that the more science learns, the less room there is for God. Artificial intelligence, it seems to me, may someday close a significant gap.

As Lutherans, we hold that faith is based neither on apologetic argument nor on intuition but on the word of God. If we feel that our faith is threatened by science, it may be tempting to reach for easy arguments that seem obvious to us. The problem is that what seems obvious now may not seem so obvious in fifty years. In that case, we will have encouraged people to base their faith on a foundation that cannot bear the weight.

Only God’s word can bear that weight.
